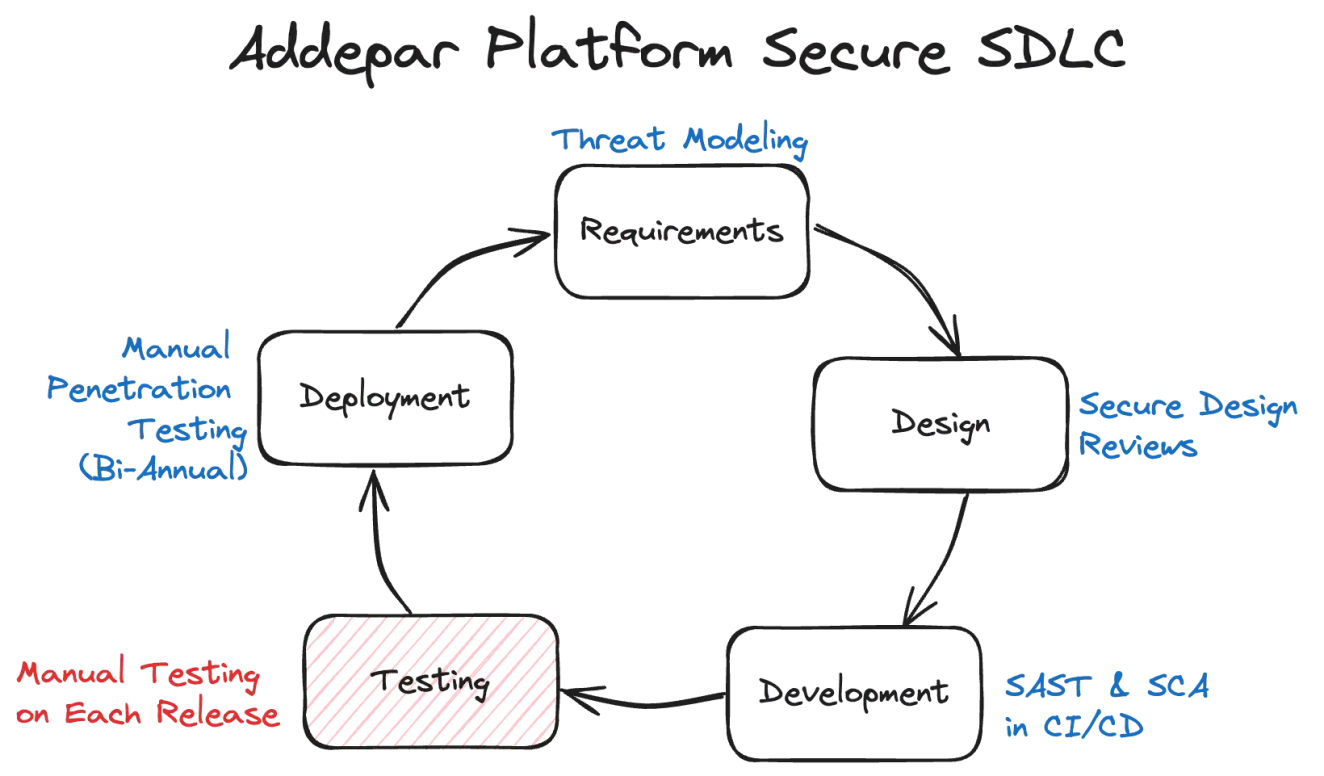

At Addepar, we are committed to incorporating security as early as possible in our Secure Software Development Lifecycle (SSDLC). As part of this strategy, we employ automated security checks in our CI processes, including code scanning (SAST, IaC, and Configuration), library scanning (SCA), secrets detection, and more. We also conduct comprehensive biannual manual tests on our core product, the Addepar Platform, as our experience consistently shows that manual security testing is essential for identifying business logic vulnerabilities and chained attacks, where multiple low-severity issues might combine to pose a high-severity risk.

A long-term goal of ours, however, has been to implement manual security testing for every bi-weekly Platform Release Candidate (RC). This would allow us to detect more vulnerabilities before they reach production, making remediation more cost-effective. Achieving this requires overcoming several challenges, primarily the time constraints of performing complex assessments within a fixed release cycle.

This need led to the creation of RedFlag, a tool that leverages AI to revolutionize our approach to security scoping and manual testing.

Platform release candidates are challenging

It turns out that performing security testing on Platform RCs before deployment is an enormous challenge:

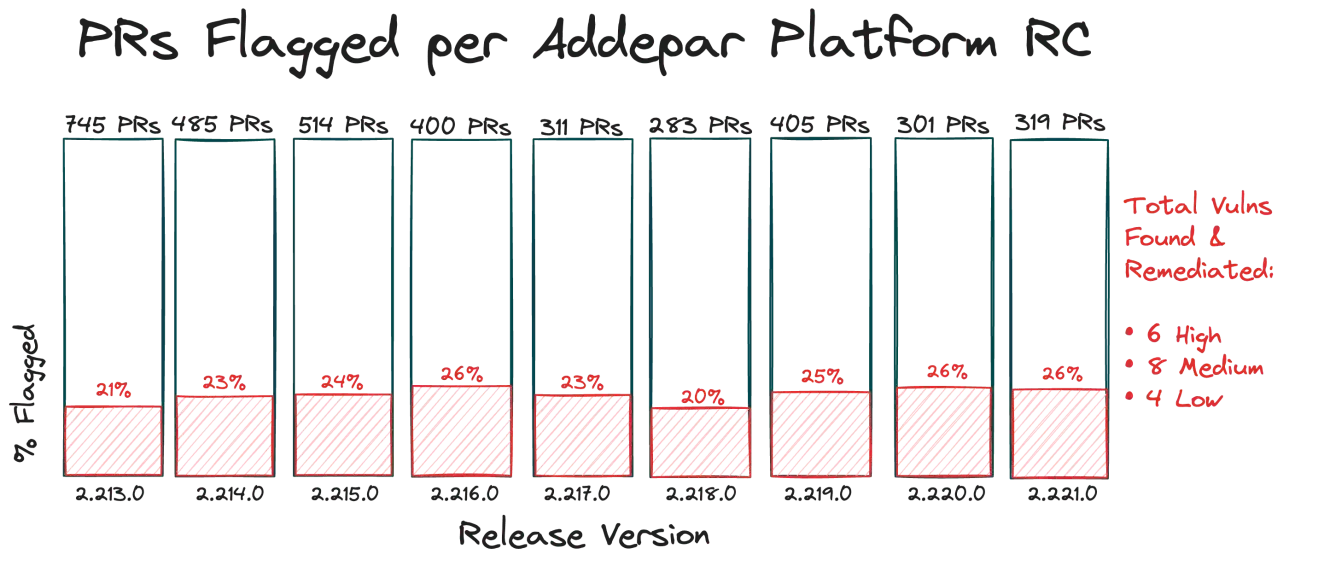

Large RCs: On average, each Platform RC includes over 400 Git pull requests (PRs), making comprehensive scoping and testing a daunting task.

Rapid release cycle: With a two-week release cycle, vulnerability identification and remediation must happen quickly and efficiently.

Limited security insights: Identifying what needs to be tested from a security perspective based solely on general release notes is difficult.

Resource constraints: As with any team, we have limited bandwidth and many competing services and products to test.

We needed a way to focus the scope of our Platform tests on high-risk areas rather than performing a full, end-to-end assessment. Doing this manually would also be a time-consuming task in itself, as it would involve going through each PR and determining its scope and whether it warrants security testing.

How RedFlag transformed our security testing

RedFlag streamlines the testing process by clearly defining the scope for each Release Candidate. This allows for more focused testing and optimizes resource allocation.

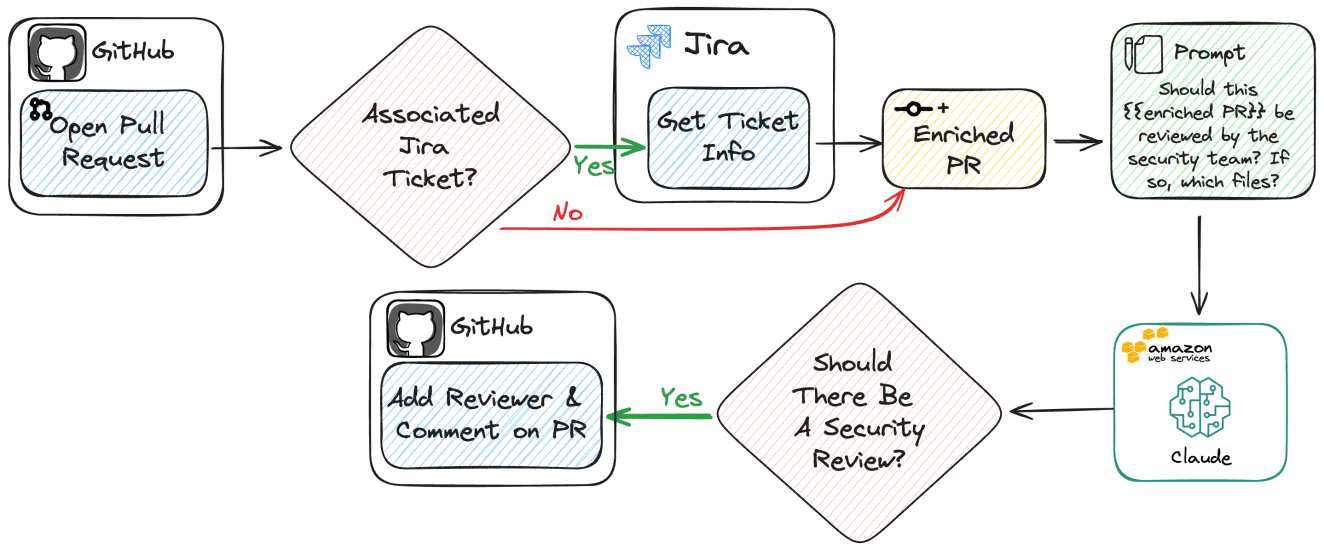

Leveraging Anthropic’s Claude v3 model via Amazon Bedrock, RedFlag analyzes each PR in an RC, enriches it with related Jira ticket information, and determines whether it warrants a manual security review. RedFlag can be used as a CLI tool with RCs, or directly in CI pipelines as a GitHub Action. RedFlag does not require any model training or fine-tuning, as it relies exclusively on in-context learning.

Using RedFlag, Addepar’s Offensive Security team can scope an entire Platform RC with hundreds of PRs and determine what needs to be tested and how it should be tested in just 10 minutes. This focused scope unlocks the ability to test each RC in a reasonable timeframe.

Workflow for release candidates

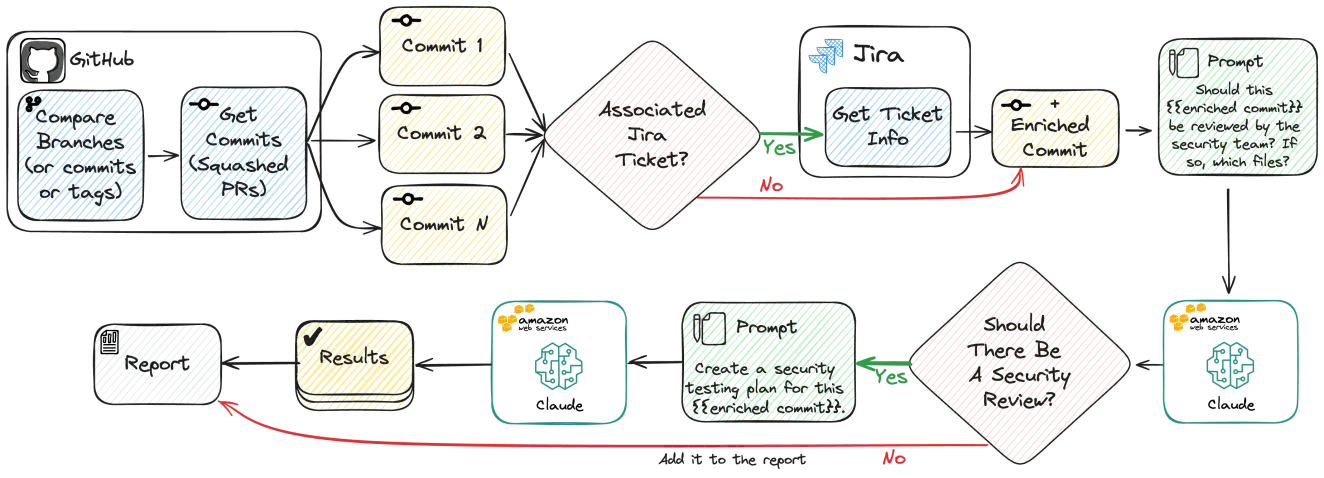

Here’s how RedFlag works to scan a full RC for high-risk commits.

Starting from the top left, RedFlag compares user-specified branches and grabs all of the relevant squashed PR commits, including titles and descriptions. If a PR has an associated Jira ticket, RedFlag uses the Jira API to enrich the PR with additional context from that ticket. This information is then fed into an LLM prompt that asks Claude v3 to determine if the PR warrants manual review by the security team.
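The enrichment and prompt-building steps above can be sketched roughly as follows. This is a minimal illustration, not RedFlag's actual code: the `PullRequest` class, the Jira key pattern, the prompt wording, and the `fake_fetch` stand-in for the Jira API are all assumptions made so the example runs offline.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical pattern for Jira keys such as "PLAT-1234"; real projects may differ.
JIRA_KEY = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


@dataclass
class PullRequest:
    title: str
    description: str
    files: List[str]
    ticket: Optional[str] = None  # Jira context, filled in by enrich()


def find_ticket_key(pr: PullRequest) -> Optional[str]:
    """Return the first Jira-style key in the PR title or description, if any."""
    match = JIRA_KEY.search(f"{pr.title}\n{pr.description}")
    return match.group(0) if match else None


def enrich(pr: PullRequest, fetch_ticket: Callable[[str], str]) -> PullRequest:
    """Attach ticket text when the PR references a ticket; otherwise leave it alone."""
    key = find_ticket_key(pr)
    if key is not None:
        pr.ticket = fetch_ticket(key)
    return pr


def build_prompt(pr: PullRequest) -> str:
    """Assemble the text that would be sent to the model for a review decision."""
    parts = [
        "Decide whether this pull request warrants manual security review.",
        f"Title: {pr.title}",
        f"Description: {pr.description}",
        "Modified files: " + ", ".join(pr.files),
    ]
    if pr.ticket:
        parts.append(f"Jira ticket: {pr.ticket}")
    return "\n".join(parts)


# Stand-in for a Jira API call (an assumption) so the sketch needs no network access.
def fake_fetch(key: str) -> str:
    return f"{key}: Add export endpoint for client reports"


pr = enrich(
    PullRequest("PLAT-1234 Add report export API", "New endpoint.", ["ExportResource.java"]),
    fake_fetch,
)
prompt = build_prompt(pr)
print(prompt)
```

Passing the ticket lookup in as a function keeps the enrichment logic testable without credentials; in a real pipeline it would be replaced by a call to the Jira API.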

If RedFlag identifies a PR as high-risk, it also generates a security test plan that outlines specific attack vectors and risky code. This is all then compiled and output into an HTML-based report, shown below.

The RedFlag output allows our team to view flagged PRs and useful information, such as a summary of the LLM analysis, PR descriptions, linked Jira ticket information, and a list of modified files.

Using this report, we treat each flagged PR as part of the assessment scope and mark it complete after manual review. This streamlined process has enabled us to conduct incremental assessments on each Platform RC in a matter of days, allowing us to perform security testing alongside QA processes.

Result validation

From the beginning, it was important that we could trust RedFlag's results. In particular, a vulnerability must not slip past RedFlag only to be picked up months later in a biannual test. To address this, we built an evaluation framework based on real Addepar data that enables rapid prompt iteration.

    {
      "repository": "Addepar/Platform",
      "commit": "abcabcabcabcabcabcabcabcabcabcabcabca",
      "should_review": false,
      "reference": "It updates the instance AMI to a larger instance and does not affect application code."
    },
    {
      "repository": "Addepar/Platform",
      "commit": "0123456789012345678901234567890123456789",
      "should_review": true,
      "reference": "This PR introduces a new API endpoint within the InternalFileName.java file."
    }

Given an evaluation file like the example above, RedFlag runs its standard commit enrichment process. Instead of generating a report, however, it uses a chain-of-thought question/answer evaluation prompt with Claude. This lets us compare the LLM's output with our known-correct responses and so assess the accuracy of our prompts.
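To illustrate how such a file can be scored, here is a simplified sketch. It only compares the boolean `should_review` label with a prediction, whereas the evaluation described above also uses an LLM prompt to judge the reasoning against `reference`. The array wrapper, the placeholder commit values, the `score` function, and the `flag_everything` stand-in for the model are assumptions for illustration.

```python
import json

# Entries in the shape of the example file; wrapping them in an array and the
# short placeholder commit values are assumptions made for this sketch.
EVAL_JSON = """
[
  {"repository": "Addepar/Platform", "commit": "aaa", "should_review": false,
   "reference": "Infrastructure-only change."},
  {"repository": "Addepar/Platform", "commit": "bbb", "should_review": true,
   "reference": "Introduces a new API endpoint."}
]
"""


def score(entries, predict):
    """Compare predictions with labels and tally the pass rate and error types."""
    passed = false_pos = false_neg = 0
    for entry in entries:
        predicted = predict(entry)
        expected = entry["should_review"]
        if predicted == expected:
            passed += 1
        elif predicted:
            false_pos += 1  # flagged, but the label says no review was needed
        else:
            false_neg += 1  # missed a PR that should have been reviewed
    return {
        "pass_rate": passed / len(entries),
        "false_positives": false_pos,
        "false_negatives": false_neg,
    }


# Stand-in for the LLM call: an overly cautious reviewer that flags every PR.
def flag_everything(entry):
    return True


entries = json.loads(EVAL_JSON)
result = score(entries, flag_everything)
print(result)
```

Counting false positives and false negatives separately matters here because the two errors are not equally costly: a false positive adds review work, while a false negative lets a risky change go untested.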

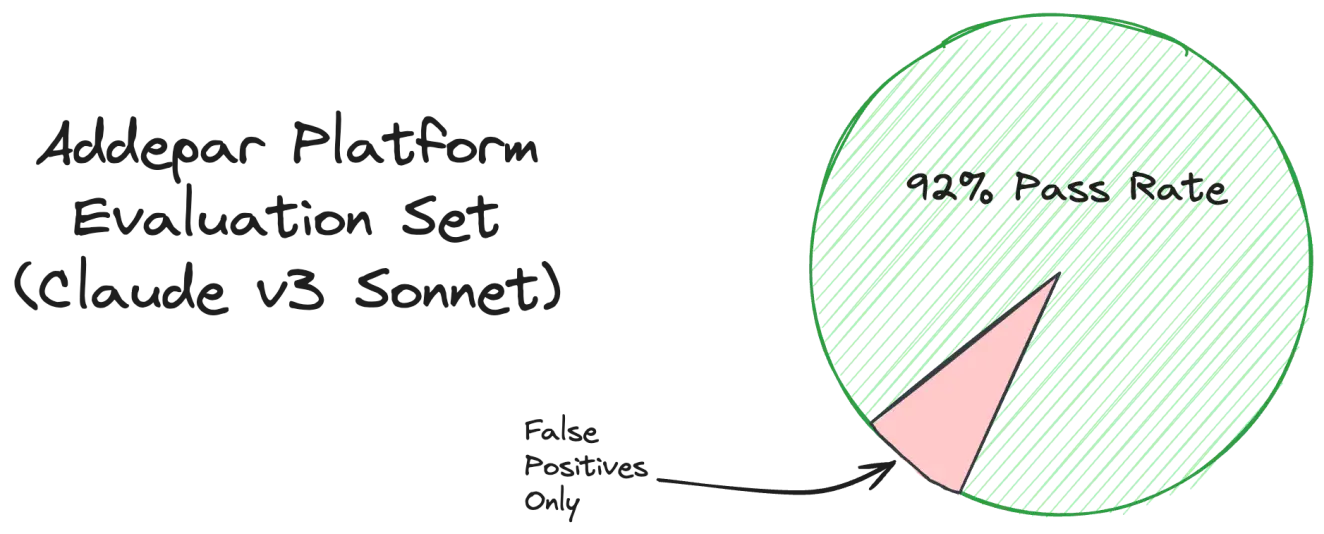

Using a custom evaluation dataset of previously reviewed Platform PRs, our current prompts achieve a 92% pass rate, with the only incorrect responses being false positives. Our prompts are specifically designed to avoid false negatives and to err on the side of caution. We currently use the Claude v3 Sonnet model but plan to experiment with Claude v3 Opus. Given RedFlag's comprehensive configuration options, this change will be simple to evaluate and implement with the framework above.

Real-world results

RedFlag reduced the number of PRs we need to review per RC from an average of about 400 to fewer than 100, making the scope of our security assessments much more manageable.

The results are tangible: the OffSec team has officially tested the last nine RCs, identifying six high-severity issues that were fully remediated before the corresponding release was deployed. Using Claude v3 Sonnet, RedFlag incurs an average cost of $0.02 per commit review, amounting to about $8 for an entire Addepar Platform RC of 400 PRs.
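The figures above can be checked with a few lines of arithmetic. The constants come from the text; treating "fewer than 100" as an upper bound of 100 is an assumption, so the computed reduction is a lower bound.

```python
from decimal import Decimal

COST_PER_COMMIT = Decimal("0.02")  # average cost per commit review, from the text
PRS_PER_RC = 400                   # average PRs per Platform RC, from the text
FLAGGED_UPPER_BOUND = 100          # "fewer than 100" PRs remain in scope

# Total LLM cost of scoping one RC.
cost_per_rc = COST_PER_COMMIT * PRS_PER_RC

# Share of PRs removed from manual review scope (at least this much).
min_scope_reduction = 1 - Decimal(FLAGGED_UPPER_BOUND) / Decimal(PRS_PER_RC)

print(f"Estimated cost per RC: ${cost_per_rc:.2f}")
print(f"Scope reduction: more than {min_scope_reduction:.0%}")
```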

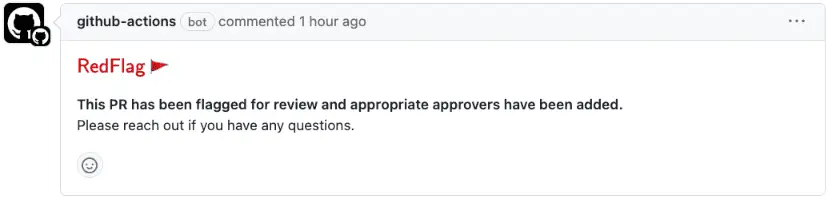

Shifting further left: RedFlag in CI

RedFlag also integrates into CI pipelines at the PR level. It can evaluate individual PRs, enhance the context with associated Jira ticket details and assess them using the LLM. If a PR is flagged, RedFlag automatically adds designated reviewers and posts a comment to notify the PR authors. This approach enables potential issues to be addressed early in the CI pipeline before new code is deployed.

As with the CLI, most of RedFlag's behavior in this PR workflow is configurable, from the designated reviewers to the text of the PR comment.
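The PR-level decision can be sketched as a small pure function that turns the LLM verdict and a configuration into planned actions. The `ReviewConfig` and `PlannedActions` names, their fields, and the default values are hypothetical and do not reflect RedFlag's real configuration keys; an actual CI job would carry out the plan through the GitHub API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ReviewConfig:
    # Hypothetical settings; names are illustrative, not RedFlag's real config keys.
    reviewers: List[str] = field(default_factory=lambda: ["security-team"])
    comment: str = "This PR was flagged for manual security review."


@dataclass
class PlannedActions:
    add_reviewers: List[str] = field(default_factory=list)
    comment: Optional[str] = None


def plan_actions(flagged: bool, config: ReviewConfig) -> PlannedActions:
    """Decide what the CI job should do with a PR after the LLM verdict."""
    if not flagged:
        return PlannedActions()  # unflagged PRs proceed with no extra steps
    return PlannedActions(add_reviewers=list(config.reviewers), comment=config.comment)


config = ReviewConfig(reviewers=["offsec-oncall"], comment="Flagged: please wait for OffSec review.")
flagged_plan = plan_actions(True, config)
clean_plan = plan_actions(False, config)
print(flagged_plan)
print(clean_plan)
```

Separating the decision from the side effects keeps the reviewers and comment text in configuration and makes the behavior easy to test without touching a real repository.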

Versatility beyond security

While we built RedFlag for our security testing needs, its flexible configuration makes it a versatile tool for a variety of teams and processes. For example, just by modifying RedFlag's LLM prompts, it could be repurposed in minutes by teams such as:

Quality assurance (QA): By configuring RedFlag to identify PRs that impact critical parts of the application, QA can prioritize their manual testing efforts, focusing on areas most likely to introduce defects or regressions.

DevOps: RedFlag can be utilized by DevOps teams to monitor and enforce best practices in code deployment and infrastructure changes. For example, it can be set up to flag high-risk changes in deployment scripts or configurations that might affect system stability, scalability, or efficiency.

Product management: RedFlag can be tailored to assist product managers by analyzing RCs to check their alignment with product roadmaps and feature requirements. This ensures that development efforts are consistently in line with strategic goals and that any deviations are flagged for review.

RedFlag is open source

Ready to empower your team with RedFlag's capabilities? Here's how to get started:

Quick setup: Visit the RedFlag repository on GitHub and follow the quick-start instructions in the README file to integrate RedFlag into your environment swiftly.

Immediate scanning: With the default setup, you can begin scanning your projects in just a few minutes using a single command.

Utilize GitHub Actions: RedFlag includes a GitHub Action that can be implemented across your repositories for automated PR evaluations.

Join the community: We encourage you to contribute to the development of RedFlag. Feel free to submit feature requests via GitHub Issues or enhance the tool with your own pull requests.

Thank you for taking the time to read about our journey in building RedFlag. We look forward to hearing about your own success stories!